This project aims to develop Smart Life Traps: live traps that use image recognition. They prevent unwanted bycatch of protected species such as the European beaver and the otter, so that only target species such as coypu and muskrat are caught. We have recently made good progress on the Smart Life Trap project, and the first catch has been made in the field.

Image recognition depends on having many pictures, and we received lots of them from the field, thanks to people from the regional water authorities as well as volunteers from zoos.

New model

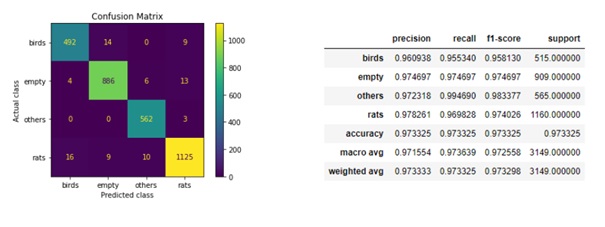

The artificial intelligence specialists built and tested a new model that is already quite accurate. The 'confusion matrix' in image 1 compares the model's predictions with the actual category of each picture, and most predictions are correct. The model predicts 'Rat group' when the animal actually belongs to the 'Rat group', and the same holds for the 'Birds' and 'Others group' categories. However, the model still made some incorrect predictions. This was improved in the last months of 2021 with new photos from the field.
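To make the confusion matrix idea concrete, here is a minimal sketch of how such a matrix can be counted from a list of true categories and a list of model predictions. The category names follow the groups mentioned in the text. The example labels are invented purely for illustration; they are not project data, and this is not the specialists' actual evaluation code. Correct predictions land on the diagonal, and every off-diagonal count is a mistake.

```python
# Category names modelled on the groups mentioned in the text (illustrative only).
CLASSES = ["Rat group", "Birds", "Others group"]


def confusion_matrix(actual, predicted, classes=CLASSES):
    """Count predictions: row i, column j is the number of pictures whose
    true category is classes[i] and whose predicted category is classes[j]."""
    index = {name: i for i, name in enumerate(classes)}
    matrix = [[0] * len(classes) for _ in classes]
    for true_label, predicted_label in zip(actual, predicted):
        matrix[index[true_label]][index[predicted_label]] += 1
    return matrix


def accuracy(matrix):
    """Fraction of predictions on the diagonal, i.e. the correct ones."""
    correct = sum(matrix[i][i] for i in range(len(matrix)))
    total = sum(sum(row) for row in matrix)
    return correct / total if total else 0.0


if __name__ == "__main__":
    # Invented example labels, NOT results from the project.
    actual = ["Rat group", "Rat group", "Birds", "Others group", "Birds"]
    predicted = ["Rat group", "Others group", "Birds", "Others group", "Birds"]
    m = confusion_matrix(actual, predicted)
    for name, row in zip(CLASSES, m):
        print(f"{name:>12}: {row}")
    print(f"accuracy: {accuracy(m):.2f}")
```

In this toy run, one 'Rat group' picture is mistaken for 'Others group'. That single error shows up as the only non-zero cell off the diagonal.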

Real life tests and results

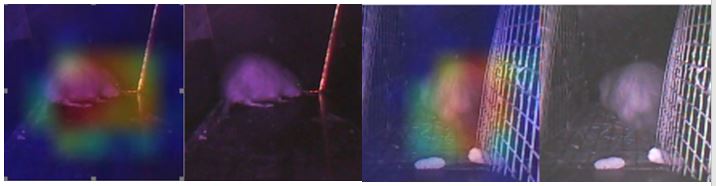

The photos in image 2 have a 'heatmap' overlay: the more yellow or red an area is, the more the model relied on that part of the picture for recognition. The photos show that the model concentrates mainly on the animal itself, even when other objects such as leaves or food are also in the picture. Recognition also succeeds at night.

Many images

In the past months we also succeeded in adding many images of otters and beavers, with help from a German otter centre (Otter-Zentrum Hankensbüttel), among others. This will make the model even more accurate, even when an animal looks like a target species but is actually a different one.

Camera

The camera was built into 5 traps and tested in different settings, by day and at night. The first actual catch in the field, a muskrat, occurred in December. As a control, we use 24-hour wildlife cameras to observe the test traps and the animals entering them.

50 Smart Life Traps

In the first months of 2022, a total of 50 Smart Life Traps will be deployed across three countries: Germany (20), Belgium (5) and the Netherlands (25).